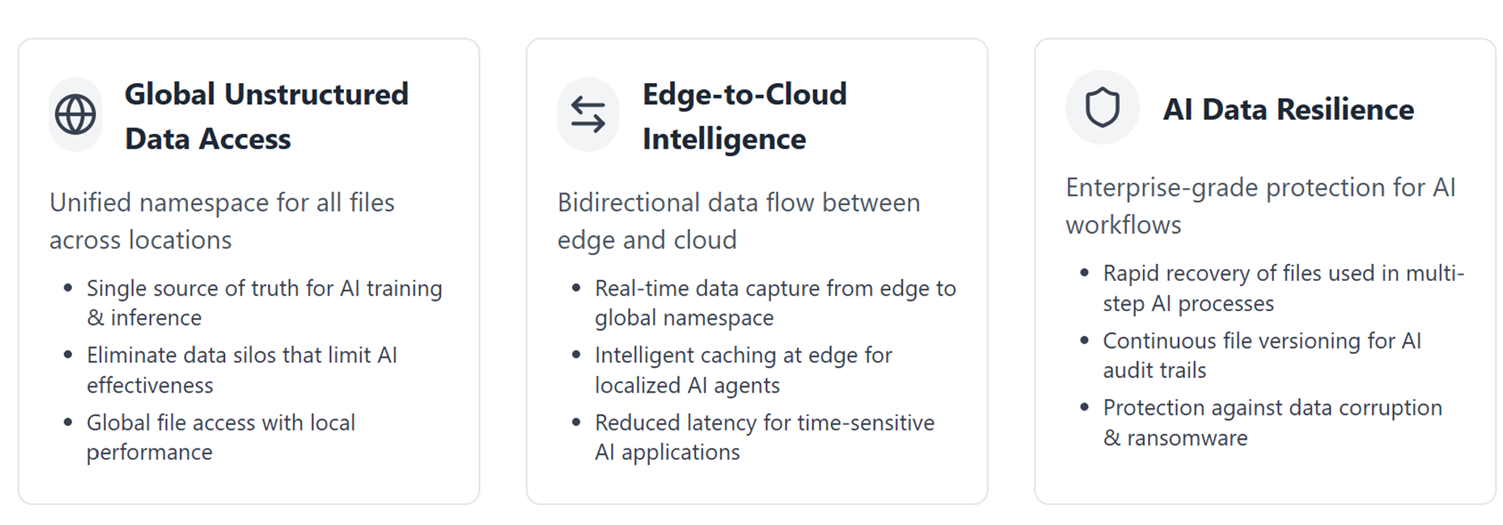

The Three Non-Negotiable Pillars of AI Data Infrastructure

Jim Liddle outlines the pillars of AI data infrastructure: unstructured data access, edge-to-cloud intelligence, and data resilience.

July 22, 2025 | Jim Liddle

It’s starting to become obvious that most AI initiatives fail because the underlying data infrastructure can’t deliver what AI actually needs.

This is because companies assume they can leverage their existing file infrastructure for their AI initiatives, but the reality is that there are three fundamental pillars that will determine whether an AI strategy initiative will thrive or barely survive. In this blog, I’ll go over each in depth.

Pillar 1: Global Unstructured Data Access

AI models are only as good as the data they can access, and most unstructured file data looks like a Jackson Pollock painting splattered across NAS islands, cloud buckets, edge devices, and regional file servers.

Why This Matters for AI

When your training data lives in umpteen different silos, you’re not providing joined up context to AI. Instead, you’re providing narrow views of data that each see a fraction of the reality of your business.

The technical requirement here is straightforward but challenging: a unified global namespace that presents all unstructured data as if it were local, regardless of where it physically resides. This isn’t just about convenience, it’s about AI effectiveness.

Let’s consider what happens without this:

- Storage Architects waste 60-80% of their time on data integration instead of AI use cases.

- Retrieval Augmented Generation initiatives provide less qualitative answers because they don’t have access to all the data sets to provide better context.

- Inference at edge locations fails because you can’t easily get access to data in different company locations

- Version control curation becomes a nightmare when the same file has different versions in different locations

The unified namespace solves this by creating a single source of truth. But, it must also deliver local performance globally. Data has gravity, so the data still needs to satisfy the 80% use case which is being where and when it’s needed. The physics of data movement hasn’t changed just because we’re doing AI now!

Pillar 2: Edge-to-Cloud Intelligence

The second pillar addresses, in my opinion, a fundamental mismatch in AI architectures: The freshest, most valuable data is being generated at the edge but needs to be accessible to cloud-based AI services on-demand.

The Bidirectional Challenge

This isn’t just about pushing data in one direction. Real global data architectures need the following.

Edge → Cloud: Fresh data needs to flow continuously into the global namespace where it can be accessible to AI solutions and services. You can’t just sync ‘everything,’ as that would overwhelm your WAN and cloud egress budgets. The edge infrastructure has to be smart enough to only coalesce the changes.

Cloud → Edge: Data needs to be intelligently cached at edge locations, as needed, whether this is for local inference access or for employee and application access. The technical requirement is intelligent, policy-driven caching that understands data access patterns and AI workload requirements. This means:

- Automatic tiering that keeps hot data local without manual intervention and tiers off new or changed data back into the global namespace once it is no longer actively being worked on.

- Real-time edge synchronization for data that needs to exist in several locations

In an agentic future, one can imagine the scenario of data being generated in multiple edge locations that needs to be pinned locally to a particular edge (as that is where the local agentic workflow needs to access it for its multi-step workflow). This scenario is very real and requires both the global namespace and the intelligent edge architecture to work seamlessly.

Pillar 3: AI Data Resilience

Agentic AI workflows can be incredibly fragile from a data perspective. They involve multi-step pipelines. And, as these agents get bedded down in front and back-office value chains, disruption though threat actors can have a huge impact on a company’s ability to operate.

Beyond Traditional Backup

Traditional backup and restore capabilities don’t cut it for AI workloads. Here’s why.

Ransomware Reality: AI has made data even more attractive to ransomware targets because the data is often irreplaceable and critical to operations.

Recovery Time: When a real-time business process that is fulfilled through an AI Agent is impacted, you need to be back online as quickly as possible. The days, weeks, or months restore options from a more traditional backup solution are not an option.

The technical requirements for AI data resilience include:

- Continuous versioning with instant rollback capabilities

- Immutable snapshots that ransomware can’t encrypt

- Granular recovery that doesn’t require full dataset restores

- Full Audit trails and recovery reports for Auditors and Legal

- Geographic edge distribution to enable the business to continue operating

This isn’t paranoia; it’s pattern recognition. The world has recently seen too many companies derailed by data loss that “couldn’t happen.”

The Integration Imperative

These three pillars aren’t independent. They’re interlocking requirements that reinforce each other:

- Global namespace enables edge-to-cloud intelligence by providing the unified view that intelligent caching requires

- Edge-to-cloud intelligence makes global access performant by ensuring data locality

- Data resilience protects both the global namespace and the edge intelligence layer

Together, these components create an infrastructure that can actually support AI at scale.

The Hard Truth

Building this infrastructure isn’t trivial; it simply requires rethinking how you approach unstructured file data storage. Traditional NAS wasn’t designed for globally distributed AI workloads. Cloud-only approaches can’t handle edge latency requirements. Hybrid provides the best of both worlds.

If you’re serious about AI delivering business value, and not just demo value, these pillars aren’t optional. They’re the data foundation that determines whether your AI initiatives will scale beyond pilot projects.

Lastly, the organizations succeeding with AI aren’t necessarily the ones with the best data scientists or the biggest GPU clusters. They’re the ones that recognized early on that AI is, fundamentally, a data infrastructure challenge. They built these three pillars first, then layered AI on top of a solid foundation. The question isn’t whether you need these capabilities. The question is whether you’ll build them before or after your first major AI use case failure.

Beyond the Prompt is where vision meets velocity. Authored by Jim Liddle, Nasuni’s Chief Innovation Officer of Data Intelligence & AI, this thought-provoking series explores the bold ideas, shifting paradigms, and emerging tech reshaping enterprise AI. It’s not just about chasing trends. It’s about decoding what’s next, what matters, and how data, infrastructure, and intelligence intersect in the age of acceleration. If you’re curious about where AI is going — and how to get ahead of it — you’re in the right place.

Related resources

Data foundation for AI

Nasuni’s hybrid cloud platform capabilities enhance your AI data strategy through consolidating, understanding, and leveraging your data.

Learn more

June 19, 2025 | Jim Liddle

Data Silos Are Killing Your Employee Collaboration (And Your AI Strategy)

In his new Beyond the Prompt series, Jim Liddle discusses how data silos impact enterprise AI strategies… and how to get around them.

Read more

Webinar

Modernising File Storage Infrastructure in the Age of AI

Discover how evolving business demands and the rise of AI are reshaping file storage needs.

Learn more